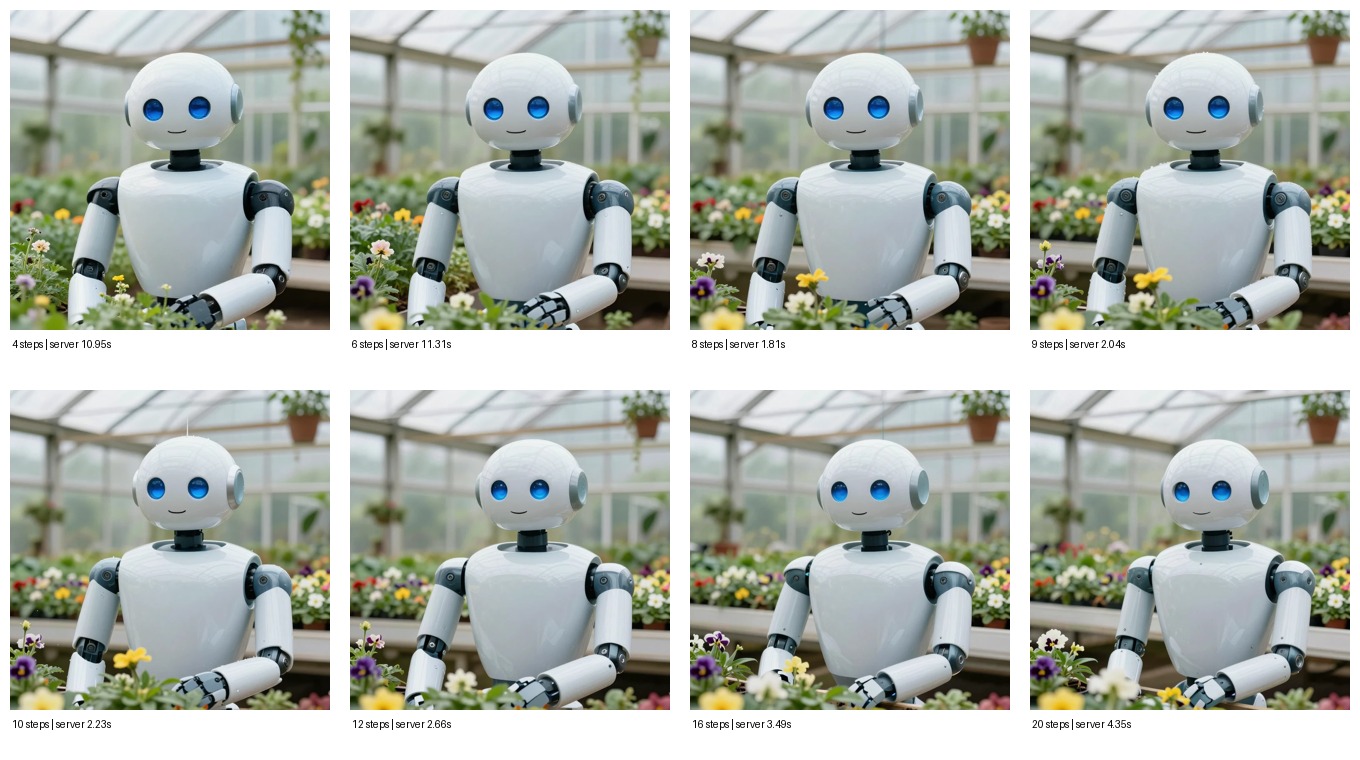

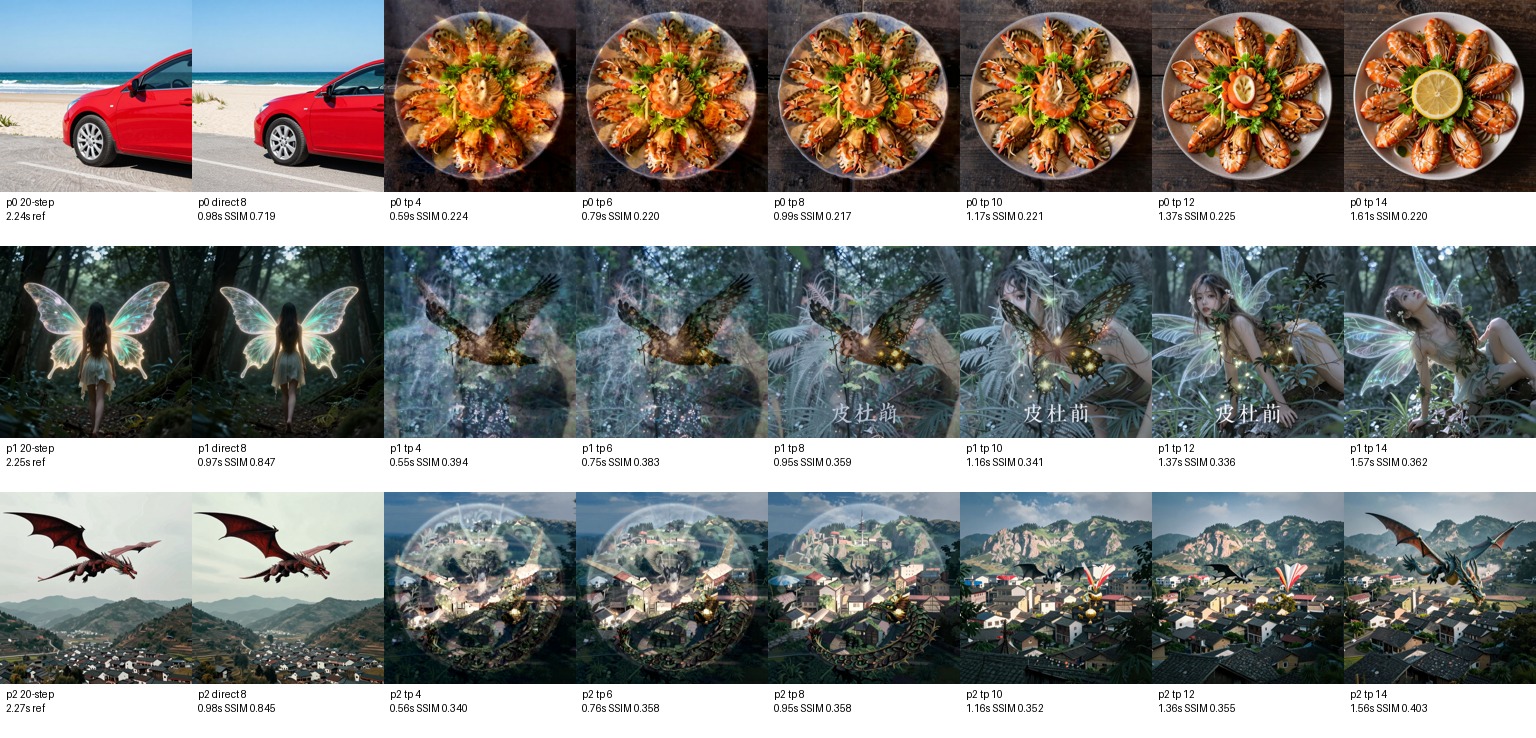

Step Count Sweep

4, 6, 8, 9, 10, 12, 16, 20 steps

Best quality/time knee. 4-6 are fast but visibly thinner; 12+ adds little for normal prompts.

Try next: Use 6 steps for drafts, 8 for production, and 12 only when detail is visibly missing.

Performance benchmarks for every CuteDSL-accelerated model and a breakdown of each acceleration technique we're researching and shipping.

Side-by-side image sweeps showing what each setting changes and how much latency it costs

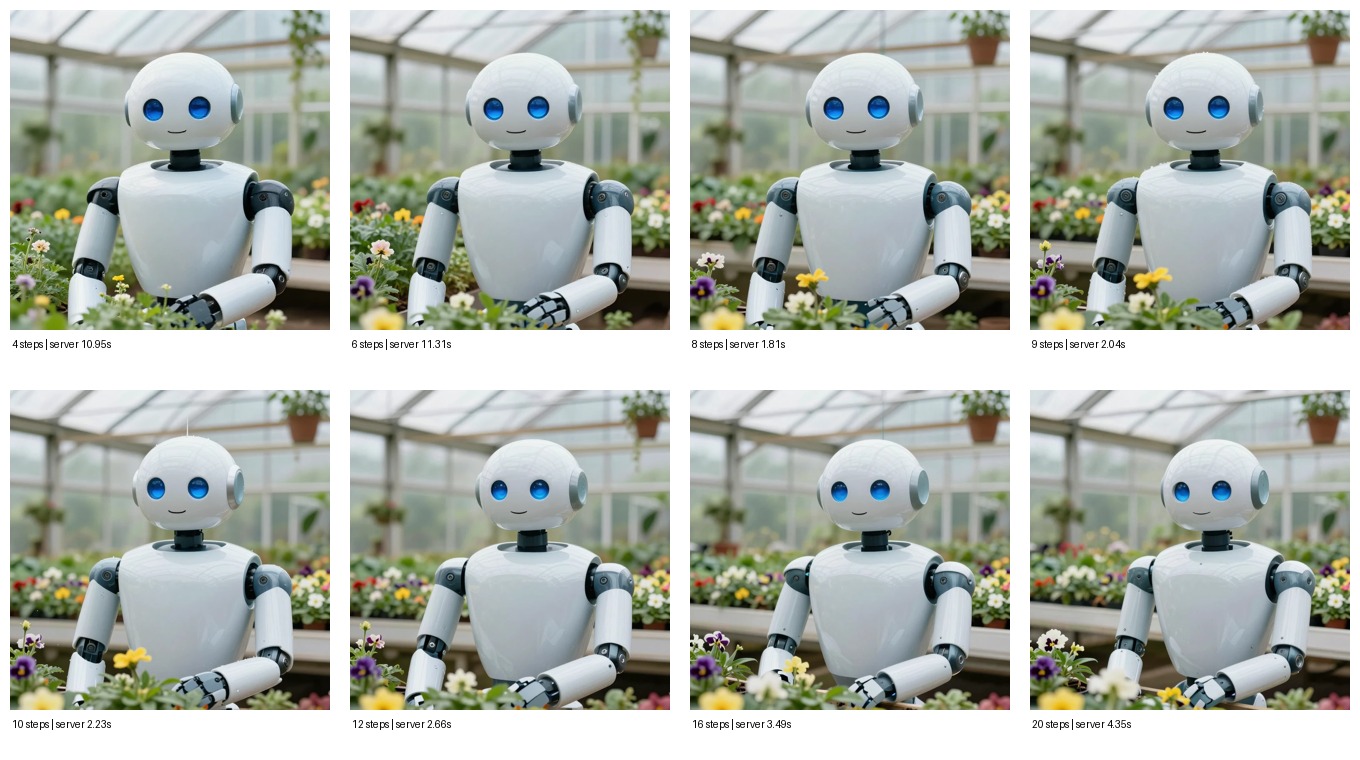

6, 7, 8, 9, 10 steps

The jump from 7 to 8 is usually worth it; 9-10 is mostly polish.

Try next: Use this range when tuning API presets or adding a fast/quality toggle.
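A sweep like this can be scripted with a small timing harness. The sketch below is illustrative only: `generate` is a hypothetical callable standing in for the real pipeline, and `fake_generate` exists just so the example runs without a GPU.

```python
import statistics
import time


def sweep_steps(generate, step_counts, repeats=3, warmup=1):
    """Return {steps: median latency in ms} for each step count."""
    results = {}
    for steps in step_counts:
        # Discard warm-up runs so compile time and cache fills are not measured.
        for _ in range(warmup):
            generate(steps)
        samples = []
        for _ in range(repeats):
            start = time.perf_counter()
            generate(steps)
            samples.append((time.perf_counter() - start) * 1000.0)
        results[steps] = statistics.median(samples)
    return results


def fake_generate(steps):
    """Hypothetical stand-in whose cost grows with the step count."""
    total = 0
    for i in range(steps * 2000):
        total += i
    return total


if __name__ == "__main__":
    for steps, ms in sweep_steps(fake_generate, [6, 7, 8, 9, 10]).items():
        print(f"{steps:>2} steps: {ms:.3f} ms")
```

When timing real GPU work, the device has to be synchronized before each clock read, otherwise only the kernel launch is measured.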

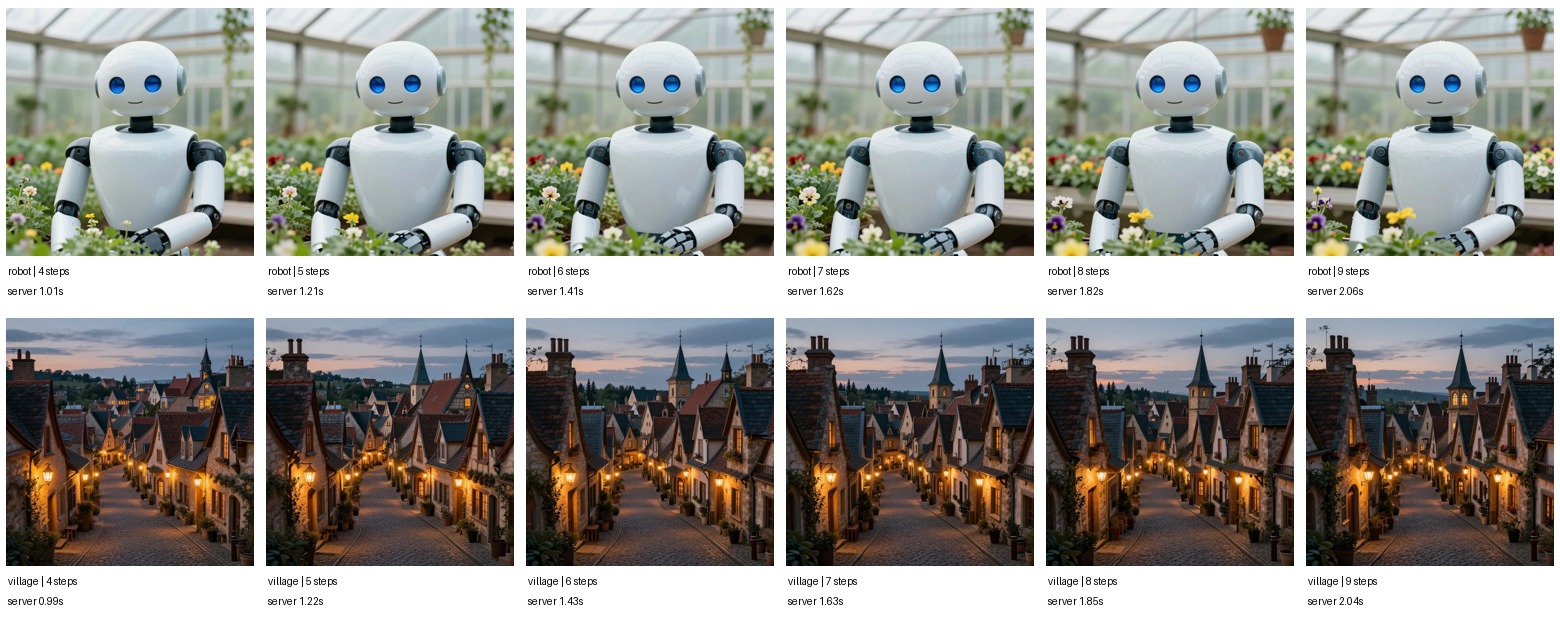

Start steps 1-7 at 8 total steps

All replay settings were pixel-identical; later start wins because it reruns less denoising.

Try next: Keep exact-prompt cache hits at step 7 and track warm replay latency separately from first-run compile latency.
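One way to picture the exact-prompt replay path is a cache keyed by the full request that stores the latent reached after each step, so a hit at step 7 of 8 reruns only the final step. This is a minimal sketch under assumptions: `ReplayCache`, `generate`, `denoise_step`, and `init_latent` are hypothetical names, and the latents here are plain floats rather than tensors.

```python
import hashlib


class ReplayCache:
    """Stores the latent reached after N completed steps, per exact request."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def key(prompt, seed, total_steps):
        raw = f"{prompt}\x00{seed}\x00{total_steps}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def put(self, key, steps_done, latent):
        self._store.setdefault(key, {})[steps_done] = latent

    def get(self, key, steps_done):
        return self._store.get(key, {}).get(steps_done)


def generate(prompt, seed, total_steps, denoise_step, init_latent, cache, start_step=7):
    """Run the denoising loop, resuming from a cached latent when one exists."""
    k = cache.key(prompt, seed, total_steps)
    cached = cache.get(k, start_step)
    if cached is not None:
        latent, first = cached, start_step      # warm replay: skip finished steps
    else:
        latent, first = init_latent(seed), 0    # cold run: start from noise
    steps_run = 0
    for i in range(first, total_steps):
        latent = denoise_step(latent, i)
        steps_run += 1
        cache.put(k, i + 1, latent)             # real tensors would need a copy here
    return latent, steps_run
```

Because the loop is deterministic for a fixed prompt and seed, the replayed result matches the cold run, which is consistent with the pixel-identical outputs reported above.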

4, 6, 8, 10, 12, 14 refinement steps

Fast, but quality is not yet competitive with direct 8-step Z-Image.

Try next: Try better cache matching and learned combiners before exposing this path to users.

SOTA time series forecasting — RTX 5090, B=1, context=768, base model (768 d_model, 12 layers), H=16

| Configuration | Mode | Latency | Speedup | Memory |
|---|---|---|---|---|
| Original Chronos2Pipeline | Baseline | 41.98 ms | 1.0x | 248 MB |
| CuteChronos2 (eager) | Eager | 19.15 ms | 2.19x | 247 MB |
| CuteChronos2 (torch.compile) | Compiled | 1.55 ms | 27.1x | 237 MB |
| CuteChronos2 + TQ4 (compiled) | Quantized | 16.40 ms | 2.56x | ~200 MB |

triton_kernels/attention.py: FlashAttention-style tiling without 1/sqrt(d_k) scaling. Avoids materializing the S×S attention matrix. FP32 softmax for numerical stability.

triton_kernels/rms_layernorm.py: T5-style RMS normalization in a single Triton kernel. FP32 variance computation, no mean subtraction.
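For reference, the math behind a T5-style RMS norm is small enough to write out. This is a plain-Python sketch of the formula, not the Triton kernel. The default `eps` is an assumption, and Python floats stand in for the FP32 accumulation.

```python
import math


def rms_layernorm(x, weight, eps=1e-6):
    """Scale x by the reciprocal of its root mean square; no mean subtraction."""
    variance = sum(v * v for v in x) / len(x)      # mean of squares, not centered
    inv_rms = 1.0 / math.sqrt(variance + eps)
    return [w * v * inv_rms for v, w in zip(x, weight)]
```

With unit weights and `eps=0`, the output has a mean square of exactly 1, which is a convenient check when validating a fused kernel against a reference.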

triton_kernels/rope.py: Rotary Position Embeddings fused into one kernel: inv_freq computation + cos/sin + Q/K rotation.

triton_kernels/fused_layernorm_linear.py: Merges RMS LayerNorm and linear projection. Eliminates the normalized intermediate tensor entirely.

triton_kernels/fused_mlp.py: Two-layer MLP with ReLU activation in a single kernel pass. Avoids 3072-wide intermediate buffer allocation.

triton_kernels/fused_output.py: Output rearrange + sinh + unscale in a single kernel pass over the output tensor.

triton_kernels/fused_preprocess.py: NaN-aware preprocessing pipeline. Two-phase: shared memory reduction for statistics, then transform.

cpp/preprocessing.cu: C++/CUDA NaN-aware normalization and patching with shared memory reductions for per-series statistics.
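The preprocessing and output kernels can be read as an inverse pair. The sketch below assumes the forward transform is `asinh` of the standardized value, inferred from the sinh + unscale step on the output side. Patching is omitted, and the function names are hypothetical.

```python
import math


def nan_stats(series):
    """Phase 1: mean and standard deviation over the non-NaN values only."""
    valid = [v for v in series if not math.isnan(v)]
    if not valid:
        return 0.0, 1.0
    loc = sum(valid) / len(valid)
    var = sum((v - loc) ** 2 for v in valid) / len(valid)
    scale = math.sqrt(var) or 1.0          # guard against constant series
    return loc, scale


def preprocess(series):
    """Phase 2: standardize, then compress with asinh; NaNs pass through."""
    loc, scale = nan_stats(series)
    z = [v if math.isnan(v) else math.asinh((v - loc) / scale) for v in series]
    return z, loc, scale


def postprocess(z, loc, scale):
    """Inverse of preprocess: sinh, then undo the scaling."""
    return [math.sinh(v) * scale + loc for v in z]
```

A round trip through `preprocess` and `postprocess` should return the original values, with missing points staying NaN.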

Z-Image Turbo transformer — 30 layers, dim=3840, 30 heads, SiLU-gated FFN (hidden=10240)

| Configuration | Mode | Latency | Speedup | VRAM |
|---|---|---|---|---|
| Original Z-Image Turbo | Baseline | 105.79 ms | 1.0x | 11,983 MB |
| CuteZImage (eager) | Eager | 93.30 ms | 1.13x | 11,977 MB |
| CuteZImage (torch.compile) | Compiled | 90.99 ms | 1.16x | 11,883 MB |

Output correctness: max_abs_error = 0.0 (bit-exact match with original)

triton_kernels/fused_silu_gate_ffn.py: Fuses silu(w1(x)) * w3(x) in a single kernel. Eliminates the 10240-wide intermediate allocation that dominates memory bandwidth.
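The expression silu(w1(x)) * w3(x) can be sketched in plain Python to show what the fusion avoids. Each hidden unit's gate and up value are combined immediately instead of being stored as two full-width buffers. This is a reference for the math only, with `w1` and `w3` given as lists of rows, and it is not the Triton kernel.

```python
import math


def silu(v):
    """x * sigmoid(x), written to avoid overflow for large negative inputs."""
    if v >= 0.0:
        return v / (1.0 + math.exp(-v))
    e = math.exp(v)
    return v * e / (1.0 + e)


def silu_gate_hidden(x, w1, w3):
    """Compute silu(w1 @ x) * (w3 @ x) one hidden unit at a time."""
    hidden = []
    for row1, row3 in zip(w1, w3):
        gate = sum(a * b for a, b in zip(row1, x))
        up = sum(a * b for a, b in zip(row3, x))
        hidden.append(silu(gate) * up)      # combined without storing gate or up
    return hidden
```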

triton_kernels/fused_adaln_norm.py: Timestep conditioning fused with normalization in one pass instead of separate modulation + norm.

triton_kernels/rope_complex.py: Fuses reshape + complex multiply + flatten for Z-Image's complex multiplication convention. Avoids intermediate complex tensor allocations.
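The reshape + complex multiply + flatten sequence can be illustrated on a single vector. The sketch assumes adjacent elements are paired as (real, imaginary) and uses a frequency base of 10000. Both are assumptions for illustration, not confirmed details of Z-Image.

```python
import cmath


def rope_complex(x, position, base=10000.0):
    """Rotate consecutive (real, imag) pairs of x by position-dependent angles."""
    d = len(x)
    if d % 2:
        raise ValueError("head dimension must be even")
    out = []
    for i in range(d // 2):
        theta = position * base ** (-2.0 * i / d)
        z = complex(x[2 * i], x[2 * i + 1]) * cmath.exp(1j * theta)  # complex multiply
        out.extend((z.real, z.imag))                                 # flatten back
    return out
```

Rotating both the query and the key this way makes their dot product depend only on the distance between the two positions, which is a useful property to test a fused kernel against.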

triton_kernels/rms_norm.py: Triton-accelerated RMS normalization with FP32 variance and optional weight scaling.

csrc/cute_rms_norm.cu: Vectorized 128-bit loads/stores (bfloat162, half2, float4) for maximum memory bandwidth.

csrc/cute_silu_gate.cu: Vectorized SiLU activation + gating for float32, float16, and bfloat16 with coalesced memory access.

triton_kernels/fused_qkv_norm_rope.py: Combined QKV projection, normalization, and RoPE in a single multi-operation fusion kernel.

End-to-end generation latency — Python server vs Go+C migration — RTX 5090, 9-step Z-Image Turbo

| Configuration | Mode | Latency | Speedup | Memory |
|---|---|---|---|---|
| Python (CPU offload) | Eager | ~32,000 ms | 1.0x | ~7 GB |
| Python (GPU resident) | Eager | ~31,500 ms | 1.02x | ~18 GB |
| Python + CuteDSL Kernels | Fused | ~30,000 ms | 1.07x | ~18 GB |
| Go+C (Python embed) | CGO Bridge | ~31,200 ms | 1.03x | ~18 GB |
| Go+C (LibTorch native) | Projected | ~100 ms | ~320x | ~14 GB |
| Go+C + NVFP4 | Projected | ~60 ms | ~533x | ~8 GB |

Note: Current ~32s latency is dominated by Z-Image Turbo's 9-step diffusion loop, not Python overhead. The projected LibTorch numbers assume direct CUDA execution without Python/torch runtime dispatch.

Semantic LoRA selection — keyword matching vs embedding similarity (gobed)

| Engine | Latency | Accuracy |
|---|---|---|
| Keyword (Python) | <0.1 ms | Good |
| Embedding (Python, sentence-transformers) | 4 ms | Excellent |
| Embedding (Go, gobed) | <1 ms | Excellent |
| Embedding + Negative (Python) | 5 ms | Best |
| Embedding + Negative (Go, gobed) | <1 ms | Best |
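As a rough picture of embedding-based selection, the sketch below scores each LoRA by cosine similarity to the prompt embedding and subtracts a penalty for similarity to an optional negative embedding. The scoring rule, `negative_weight`, and all names are assumptions for illustration. The page does not describe how gobed or the negative variant actually computes its score.

```python
import math


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def select_lora(query_vec, candidates, negative_weight=0.5):
    """candidates maps name -> (positive_vec, negative_vec or None)."""
    best_name, best_score = None, -math.inf
    for name, (pos, neg) in candidates.items():
        score = cosine(query_vec, pos)
        if neg is not None:
            # Penalize prompts that also resemble what this LoRA should avoid.
            score -= negative_weight * max(0.0, cosine(query_vec, neg))
        if score > best_score:
            best_name, best_score = name, score
    return best_name, best_score
```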

MAE vs latency across quantization configs — 7 symbols, context=768

| Config | Compression | H=16 Latency | H=16 MAE | H=64 Latency | H=64 MAE | H=128 Latency | H=128 MAE |
|---|---|---|---|---|---|---|---|
| Original PyTorch | 1.0x | 41.98 ms | 1645.1 | 39.78 ms | 4768.5 | 38.96 ms | 6497.4 |
| CuteChronos2 Eager | 1.0x | 19.15 ms | 970.8 | 18.47 ms | 1496.3 | 20.77 ms | 1441.1 |
| CuteChronos2 Compiled | 1.0x | 1.55 ms | 943.4 | 1.65 ms | 1499.7 | 1.63 ms | 1461.0 |
| TQ4 (product+MSE) | 3.66x | 150.79 ms | 970.7 | 124.96 ms | 1471.9 | 123.35 ms | 1474.1 |
| TQ4 + Compiled | 3.66x | 16.40 ms | 966.1 | 22.96 ms | 1498.0 | 20.61 ms | 1467.0 |
| TQ3 (product+MSE) | 4.74x | 346.47 ms | 981.8 | 343.58 ms | 1665.1 | 344.55 ms | 2045.3 |

| Config | Sequential Total | Per Window | Batched Total | Batched/Window | MAE |
|---|---|---|---|---|---|
| Original PyTorch | 18,041 ms | 40.27 ms | N/A | N/A | 4263.2 |
| CuteChronos2 Eager | 11,439 ms | 25.53 ms | 400.6 ms | 0.89 ms | 317.8 |
| CuteChronos2 Compiled | 753.2 ms | 1.68 ms | 52.3 ms | 0.117 ms | 317.2 |
| TQ4 + Compiled | 9,312 ms | 20.78 ms | 417.3 ms | 0.93 ms | 320.2 |

Rolling forecast: 448 sequential 1-step predictions over 7 crypto/equity symbols (BTC, ETH, SOL, TSLA, AAPL, NVDA, LINK)
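The per-window columns are the totals divided by the 448 windows (for example, 18,041 ms / 448 ≈ 40.27 ms). A rolling one-step evaluation of this kind can be sketched as follows. `forecast_one_step` is a hypothetical callable standing in for the model, and the loop covers a single series.

```python
def rolling_mae(series, forecast_one_step, context_length, num_windows):
    """Mean absolute error of sequential 1-step forecasts over the series tail."""
    start = len(series) - num_windows
    if start < 1:
        raise ValueError("series too short for the requested number of windows")
    errors = []
    for t in range(start, len(series)):
        context = series[max(0, t - context_length):t]   # only past values
        prediction = forecast_one_step(context)
        errors.append(abs(prediction - series[t]))
    return sum(errors) / len(errors)
```

A naive last-value forecaster (`lambda ctx: ctx[-1]`) makes a handy baseline when checking that the windowing never leaks the target into the context.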

Everything we're building, researching, and shipping to make models faster

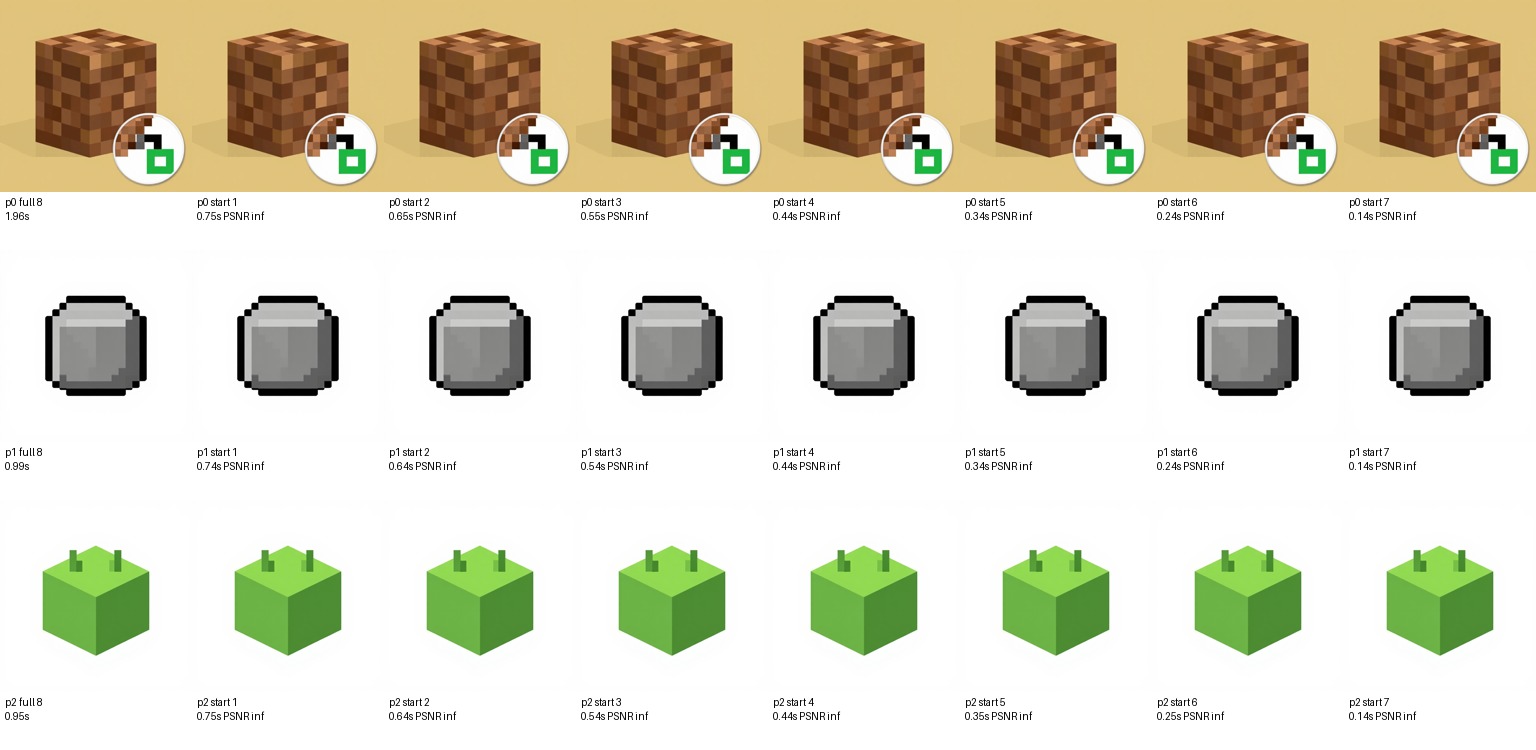

latentteleport/: Pre-compute and cache intermediate diffusion latents for common prompt patterns. Interpolate between cached latents using SLERP or neural combiners, then refine from the interpolated latent with fewer denoising steps. Reduces effective diffusion steps from ~20 to ~5.
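SLERP between two cached latents follows the standard spherical interpolation formula. This is a minimal sketch on flat Python lists, not the latentteleport code. It falls back to linear interpolation when the vectors are nearly parallel or one of them is zero.

```python
import math


def slerp(a, b, t, eps=1e-7):
    """Spherical linear interpolation from a (t=0) to b (t=1)."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return [(1.0 - t) * x + t * y for x, y in zip(a, b)]
    cos_omega = sum(x * y for x, y in zip(a, b)) / (na * nb)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))   # angle between a and b
    sin_omega = math.sin(omega)
    if sin_omega < eps:
        # Nearly parallel or antiparallel: the spherical weights are ill-conditioned.
        return [(1.0 - t) * x + t * y for x, y in zip(a, b)]
    wa = math.sin((1.0 - t) * omega) / sin_omega
    wb = math.sin(t * omega) / sin_omega
    return [wa * x + wb * y for x, y in zip(a, b)]
```

Unlike a straight average, the midpoint of two orthogonal unit vectors keeps unit length, which is the usual argument for SLERP when latents are expected to sit near a fixed norm.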

turboquant/: Vector quantization for KV cache and attention optimization. MSE and product quantization modes with Hadamard rotation for improved compression. 2-16x compression with configurable bit-widths.
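The reason a Hadamard rotation helps compression is that it spreads outliers across all coordinates before quantizing. The sketch below pairs a normalized fast Walsh-Hadamard transform with a plain uniform scalar quantizer. It is a simplified illustration, not the MSE or product modes themselves, and the function names are hypothetical.

```python
import math


def fwht(x):
    """Orthonormal fast Walsh-Hadamard transform; it is its own inverse."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    out = list(x)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = out[j], out[j + h]
                out[j], out[j + h] = a + b, a - b
        h *= 2
    s = 1.0 / math.sqrt(n)
    return [v * s for v in out]


def quantize(x, bits=4):
    """Uniform scalar quantization to 2**bits levels over [min(x), max(x)]."""
    levels = 2 ** bits - 1
    lo, hi = min(x), max(x)
    scale = (hi - lo) / levels or 1.0      # constant input: any step size works
    return [round((v - lo) / scale) for v in x], lo, scale


def dequantize(codes, lo, scale):
    return [lo + c * scale for c in codes]


def roundtrip(x, bits=4):
    """Rotate, quantize, dequantize, rotate back. Returns (reconstruction, step)."""
    codes, lo, scale = quantize(fwht(x), bits)
    return fwht(dequantize(codes, lo, scale)), scale
```

For example, `fwht([4.0, 0.0, 0.0, 0.0])` gives `[2.0, 2.0, 2.0, 2.0]`: the single outlier becomes four equal values that a low-bit quantizer represents without loss.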

cutechronos/model.py: Captures the full forward pass as a CUDA graph via torch.compile with reduce-overhead mode. Eliminates kernel launch overhead entirely. Requires fixed input shapes. Achieves 24.4x speedup on CuteChronos2.

inference/server.py: 4-bit floating point quantization for RTX 5090 Blackwell architecture via torchao. Per-block scaling gives ~2x memory reduction and faster matmuls on SM100+ GPUs.

cutechronos/, cutezimage/: Fuse multiple operations into single Triton/CUDA kernels to eliminate intermediate tensor allocations and reduce memory bandwidth pressure. Core technique across all CuteDSL modules.

cutezimage/csrc/: 128-bit vectorized loads and stores using bfloat162, half2, and float4 types. Maximizes memory bandwidth utilization on modern GPUs.

diffusionz/: Pure C++ stable diffusion inference using GGML-style quantization. Goal: run diffusion models on CPU or with minimal GPU, similar to how llama.cpp democratized LLM inference. The DiffusionZ C API (diffusionz_c_api.h) is implemented with a LibTorch backend; stable-diffusion.cpp is being evaluated as a lighter alternative backend.

Internal structure of the models we accelerate